Why the Road to the Orbital Cloud Data Center Runs Through a SmallSat

SatNews Editorial Analysis

Before we dream of floating data centers, we must understand why the SmallSat is the only laboratory that matters.

As the industry prepares for the SmallSat Symposium, we stress-test one of space’s buzziest ideas, orbital computing, against the unforgiving constraints of SmallSats.

Welcome to The Fractal Lab: a three-part series on why orbital computing succeeds or fails first at 10 kilograms, not 10,000.

Read Part 1: The Physics Trap

Part 2: The Data Gravity Dilemma

[Recap]: In Part 1, we established that space is an insulator, not a coolant. The thermal constraints of vacuum engineering force a hard ceiling on compute density. Now, we turn to the second bottleneck: getting data to the machine.

In this installment, we examine Data Gravity as it applies to the Orbital Data Center (ODC). We explore why moving exabytes to and from orbit is harder than processing them, and how this constraint fractures the market into two mutually exclusive compute types: the high-speed “Real-Time Reduction Hub” and the massive “Batch Trainer.” We also examine other computing challenges unique to space.

Bandwidth, Latency, and the Great Schism

You cannot refine oil if you cannot get it to the refinery, and uplinking exabytes of training data from Earth via radio is prohibitively slow. Even as adaptive optics mature to solve the last mile of atmospheric turbulence, optical links still struggle to match the sheer bandwidth density of physical media.

This reality bifurcates the future ODC market into two distinct compute types.

Compute 1: Edge Computing & The Real-Time Reduction Hub

Rather than trying to beam terrestrial data up, these stations will ingest raw sensor data from adjacent satellites via optical links. They will process the flood of hyperspectral or radar imagery in orbit, downlinking only the refined insights. In this model, the cloud doesn’t go to space; the Edge does.

The economic case is immediate. A constellation generating 10 terabits per second of raw Earth-observation data cannot afford to downlink it all. As a recent whitepaper notes, the vast majority of this sensor collection is ‘Dark Data’: information collected continuously but never used because it cannot be transmitted in time to be relevant. Reducing this flood to 100 gigabits per second of insights in orbit cuts downlink volume, and with it the ground-station infrastructure required, by roughly 100×.
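To put the reduction in daily terms, here is a minimal arithmetic sketch. It assumes both figures are sustained rates (10 Tb/s raw, 100 Gb/s after in-orbit processing) and decimal petabytes; the function name is illustrative only.

```python
SECONDS_PER_DAY = 86_400

def daily_volume_petabytes(rate_bits_per_s: float) -> float:
    """Convert a sustained data rate in bits/s into decimal petabytes per day."""
    return rate_bits_per_s * SECONDS_PER_DAY / 8 / 1e15

RAW_RATE_BPS = 10e12       # 10 Tb/s of raw sensor data (figure from the article)
REDUCED_RATE_BPS = 100e9   # 100 Gb/s of insights (assumed to be a sustained rate)

reduction_factor = RAW_RATE_BPS / REDUCED_RATE_BPS

print(f"Raw:     {daily_volume_petabytes(RAW_RATE_BPS):.1f} PB/day")
print(f"Reduced: {daily_volume_petabytes(REDUCED_RATE_BPS):.2f} PB/day")
print(f"Reduction factor: {reduction_factor:.0f}x")
```

Under these assumptions the raw stream is on the order of a hundred petabytes per day, which is the volume the ground segment would otherwise have to absorb.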

Compute 2: The Batch Trainer

Radical launch affordability forces us to revisit a famous networking adage: “Never underestimate the bandwidth of a station wagon full of tapes hurtling down the highway.”

In the Starship era, we simply replace the station wagon with a rocket. Launching ten tons of shrink-wrapped commercial SSDs could theoretically deliver an exabyte-scale training dataset for $200 per kilogram, bypassing the bandwidth bottleneck entirely.
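The station-wagon comparison can be checked with a rough calculation. The sketch below assumes a decimal exabyte, reads "ten tons" as 10,000 kg, and uses hypothetical sustained uplink rates chosen only for illustration; none of the link rates come from the article.

```python
EXABYTE_BYTES = 10**18      # decimal exabyte (assumption)
SECONDS_PER_DAY = 86_400

def transfer_days(dataset_bytes: int, link_bits_per_s: float) -> float:
    """Days needed to push a dataset through a link at a sustained rate."""
    return dataset_bytes * 8 / link_bits_per_s / SECONDS_PER_DAY

def launch_cost_usd(mass_kg: float, usd_per_kg: float) -> float:
    """Launch cost for a payload at a flat price per kilogram."""
    return mass_kg * usd_per_kg

# Hypothetical sustained uplink rates, for comparison only.
for rate_bps in (1e9, 1e10, 1e11):
    days = transfer_days(EXABYTE_BYTES, rate_bps)
    print(f"{rate_bps / 1e9:>5.0f} Gb/s -> {days:>10,.0f} days")

# Ten tons (taken as 10,000 kg) at the article's $200/kg target price.
print(f"Launch cost: ${launch_cost_usd(10_000, 200):,.0f}")
```

Even at a sustained 100 Gb/s, one exabyte takes more than two years to uplink, which is why a single launch of physical media looks attractive on bandwidth alone.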

However, physics likely forecloses on this “Orbital Station Wagon.” To achieve exabyte scale, this model relies on the ultra-dense NAND flash found in consumer electronics, hardware that utilizes charge traps highly vulnerable to radiation. We cannot simply swap in radiation-hardened memory; doing so would destroy the density advantage that makes the mission worth launching.

We are therefore left gambling on commercial reliability, and the odds are poor. In one high-performance computing experiment on the International Space Station, 11 of 20 commercial SSDs failed within the first year of flight. That attrition rate makes the economics of a ‘Station Wagon’ architecture nearly impossible to close.

The Great Schism: Monoliths vs. Swarms

Having looked at the potential ODC compute applications, we must also examine ODC design. That starts with the macroeconomic shift that makes an ODC theoretically possible: the collapse of launch costs.

For the last sixty years, aerospace engineering has been governed by a single tyranny: Mass is Money. With launch costs hovering between $2,000 and $10,000 per kilogram, engineers spent millions of dollars to shave grams off structure and electronics. The SpaceX Falcon 9 redefined launch affordability, but it still throws away the upper stage each time. Starship is designed so the entire rocket can be reused, like an airplane, and it carries much more cargo per flight. Reusing everything and launching more mass at once is what could drop launch costs from thousands of dollars per kilogram to the hundreds.

If fully reusable heavy-lift vehicles achieve their target price points (<$200/kg), mass ceases to be the primary constraint. For the first time, it becomes economically viable to solve engineering problems by simply adding more material—more steel, more fluid, more shielding.

This permission to be heavy is the catalyst for the current divergence in ODC design. It has emboldened two radically different philosophies on how to solve the thermal bottleneck.

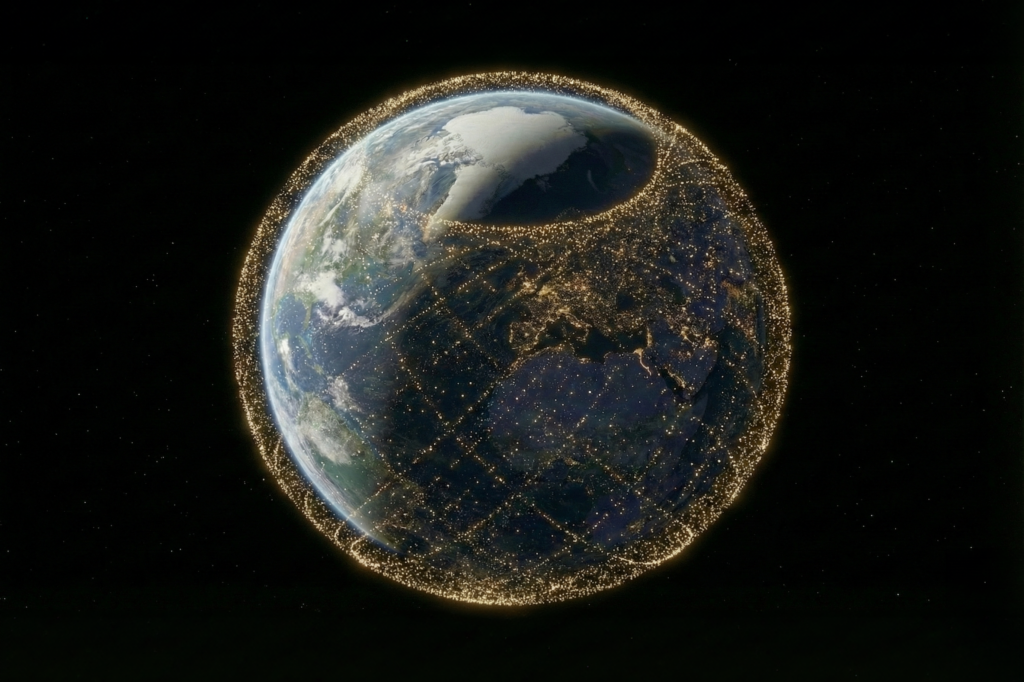

To handle these workloads while respecting the thermal limits discussed in Part 1, two opposing philosophies have emerged. Both remained largely theoretical until now. On January 30, 2026, SpaceX filed an FCC application for up to one million satellites operating between 500 and 2,000 km, including sun-synchronous inclinations, explicitly targeting “AI inference” and “Orbital Data Centers.” This filing transforms the architectural debate from whiteboard exercise to regulatory reality. More importantly, it provides the perfect test case: if swarm architecture cannot survive contact with million-unit scale, the entire distributed model collapses. Let’s examine the architectural options.

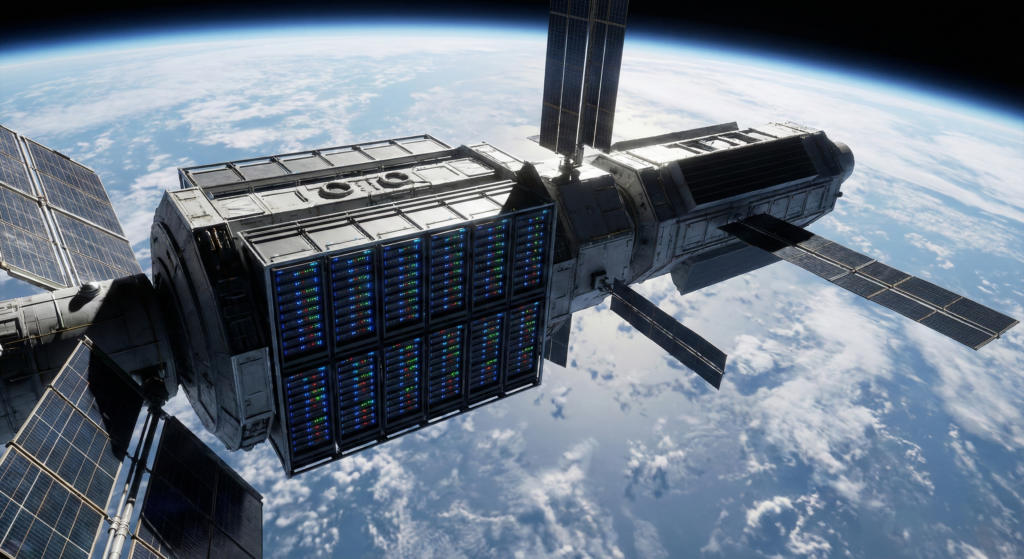

Architecture A: The Monolith (“The Floating Building”)

A small number of very large, pressurized spacecraft house dense server racks, much like terrestrial data centers moved into orbit. These systems rely on active cooling—pumps, fluid loops, and heat exchangers—to move waste heat from processors to radiators.

Advocates argue that dramatically cheaper launch costs, enabled by vehicles like Starship, make this approach viable by allowing operators to launch massive radiators, heat sinks, or coolant systems to brute-force the thermal problem.

The reality: Cheap launch solves capital cost, not system complexity. Massive active cooling loops introduce hundreds of mechanical failure points (pumps, seals, valves). Furthermore, adding mass doesn’t solve the fundamental inefficiency of radiating at low temperatures; it just makes the graveyard heavier.

Architecture B: The Swarm (“The Flying Tile”)

To mitigate the cooling complexity of monolithic data centers, a second architecture has emerged. Instead of one massive data center, thousands of small, flat, 12U-class server “tiles” operate as a distributed system, flying in loose formation rather than as a single structure. Each tile spreads heat over a large surface area, enabling mostly passive cooling through radiation.

By maximizing surface-area-to-volume ratio, each node can shed heat efficiently without complex mechanical systems. The trade is simpler hardware in exchange for harder software coordination.

The SpaceX Filing: When Swarm Scale Becomes the Enemy

SpaceX’s application reveals three critical specifications:

- One million satellites

- Sun-Synchronous Orbits (SSO) between 500 and 2,000 km

- Explicit targeting of “AI inference”

This is swarm architecture at civilization scale. It also exposes why swarm assumptions break at scale, not gradually, but catastrophically.

The Geometry Trap

SpaceX’s reliance on Sun-Synchronous Orbit suggests they aim to utilize “dawn-dusk” orbits, riding the terminator line for perpetual sunlight. But here’s the problem: the dawn-dusk terminator is a single orbital plane at any given altitude. One million satellites cannot physically occupy a single plane without creating a collision cascade.

Physics forces a choice. Spread satellites across multiple SSO planes to achieve safe spacing, and the majority will cycle through darkness on every orbit, for anywhere from several minutes to roughly a third of the orbit depending on the plane. Maintain perpetual sunlight, and satellite density in the privileged plane becomes untenable. The filing proposes both unlimited power and safe spacing. Orbital mechanics permits one or the other, not both.

The satellites forced into eclipse inherit a new constraint: they become battery farms. Every satellite must carry sufficient energy storage to maintain AI inference workloads through shadow. Whether that’s 1 kilogram of batteries or 5 kilograms per satellite is an engineering detail. The systemic outcome is not: a constellation optimized for compute becomes one optimized for energy storage, complete with the thermal penalties of high-rate battery discharge.
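For a sense of scale, the sketch below estimates the worst-case eclipse per orbit and the energy a satellite would need to carry through it. It assumes a circular orbit, a cylindrical Earth shadow, and the Sun lying in the orbital plane (the worst case; planes closer to dawn-dusk see less shadow). The 1 kW payload load is a hypothetical placeholder, not a figure from the filing.

```python
import math

MU_EARTH_KM3_S2 = 398_600.4418   # Earth's gravitational parameter
R_EARTH_KM = 6_371.0             # mean Earth radius

def orbital_period_s(alt_km: float) -> float:
    """Period of a circular orbit at the given altitude (Kepler's third law)."""
    r = R_EARTH_KM + alt_km
    return 2 * math.pi * math.sqrt(r**3 / MU_EARTH_KM3_S2)

def max_eclipse_fraction(alt_km: float) -> float:
    """Worst-case fraction of the orbit in shadow (Sun in the orbital plane)."""
    r = R_EARTH_KM + alt_km
    return math.asin(R_EARTH_KM / r) / math.pi

def eclipse_energy_wh(alt_km: float, load_w: float) -> float:
    """Energy needed to sustain a constant load through the worst-case eclipse."""
    eclipse_s = max_eclipse_fraction(alt_km) * orbital_period_s(alt_km)
    return load_w * eclipse_s / 3600

HYPOTHETICAL_LOAD_W = 1_000.0    # placeholder compute load, for illustration

for alt_km in (500, 1_000, 2_000):
    period_min = orbital_period_s(alt_km) / 60
    eclipse_min = max_eclipse_fraction(alt_km) * period_min
    energy_wh = eclipse_energy_wh(alt_km, HYPOTHETICAL_LOAD_W)
    print(f"{alt_km:>5} km: period {period_min:5.1f} min, "
          f"eclipse up to {eclipse_min:4.1f} min, {energy_wh:5.0f} Wh")
```

Under these assumptions a 500 km satellite can spend over half an hour of each roughly 95-minute orbit in shadow, so the battery must cover that entire interval at full inference load.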

SpaceX’s application does not address how satellites will be distributed across planes, what battery capacity is provisioned, or how thermal loads during eclipse will be managed. These omissions aren’t minor; they’re the difference between a viable architecture and an aspirational filing.

The Collision Dilemma

Placing one million satellites in shells as high as 2,000 km (where atmospheric drag is negligible) is an aggressive bet on reliability. At 2,000 km, debris stays up for millennia. A collision cascade (Kessler Syndrome) in this high-density shell wouldn’t just disable the data center; it would permanently close off much of LEO for future use. This transforms orbital computing from a commercial experiment into a civilization-level risk.

The Topology Challenge

In a swarm orbital data center, each satellite acts as a node, a solitary flying router responsible for handing off data to its neighbors. Topology is the invisible, constantly shifting wiring diagram that maps the connections between them, determining which nodes are linked at any given millisecond as they orbit the Earth. In orbital swarms, network partitions are unavoidable. This forces designers to confront the CAP theorem, which states that a distributed system can only guarantee two of three properties at once: Consistency (all nodes see the same data), Availability (the system always responds), and Partition tolerance (the system keeps working despite communication failures). In space, partition tolerance is non-negotiable, so operators must choose between perfect consistency and constant availability.

While SSO avoids the roughly 240-millisecond latency floor of geostationary orbit, it introduces a deadlier problem for real-time compute: jitter. In a terrestrial data center, a packet travels over fixed fiber routes with predictable timing. In a million-satellite swarm, the network topology changes every second. A packet sent from London to New York might hop across 15 different satellites one moment and 22 the next, as orbital planes drift and links break. This variability is lethal for synchronous applications like high-frequency trading or parallelized model training. The ODC in LEO may be low latency on average, but its unpredictable variance permanently disqualifies it from the interactive workloads that pay the highest premiums.
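Both numbers in this argument can be sanity-checked with a few lines. The sketch below derives the geostationary floor from the speed of light, assuming a satellite directly overhead and a one-way ground-to-satellite-to-ground path. It then shows how a change in hop count alone moves latency, using a hypothetical per-hop forwarding delay of 1 ms that is not taken from any real system.

```python
C_KM_PER_S = 299_792.458    # speed of light in vacuum
GEO_ALT_KM = 35_786.0       # geostationary altitude above the equator

def bent_pipe_latency_ms(alt_km: float) -> float:
    """One-way ground -> satellite -> ground delay, satellite directly overhead."""
    return 2 * alt_km / C_KM_PER_S * 1000

def hop_delay_swing_ms(hops_a: int, hops_b: int, per_hop_ms: float) -> float:
    """Latency change caused purely by a different hop count on the same route."""
    return abs(hops_b - hops_a) * per_hop_ms

HYPOTHETICAL_PER_HOP_MS = 1.0   # placeholder forwarding delay per satellite

print(f"GEO floor: {bent_pipe_latency_ms(GEO_ALT_KM):.1f} ms")
print(f"15 vs 22 hops: {hop_delay_swing_ms(15, 22, HYPOTHETICAL_PER_HOP_MS):.1f} ms swing")
```

The point of the second function is that the swing appears between consecutive packets on the same route, before any change in physical path length is counted.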

AI inference for “billions of users” implies a level of connectivity that defies current space networking technology. A swarm of one million satellites creates one million contention points. In this topology, network partitions are not errors; they are the default state. The computational overhead of keeping a distributed database consistent across one million moving nodes, which are constantly handing off connections, is staggering. We risk building a system that spends more energy managing its own topology than it spends computing user data.

The SpaceX filing confirms that they are betting on swarm architecture. But it does not disprove the constraints. If the only way to build an ODC is to launch a million individually constrained, battery-heavy SmallSats, then we are not building a Cloud. We are building the world’s largest, most expensive edge network and hoping the physics of heat and batteries don’t catch up with us.

The Radiation Issue

The SpaceX filing suggests that swarm architecture may become the industry consensus. But even if SpaceX solves spacing, batteries, and topology, one final constraint applies to every architecture: radiation. Regardless of orbit, modern AI hardware faces a fatal contradiction: performance requires fragility. AI training is memory-bandwidth limited, forcing architectures to rely on High Bandwidth Memory (HBM). HBM achieves its speed through dense interposers, 3D-stacked dies, and aggressive operating voltages, the exact features that make silicon susceptible to radiation. You cannot simply swap in hardened chips; legacy rad-hard memory is too slow to feed modern accelerators.

While SSO avoids the continuous frying pan of geostationary orbit, it introduces a different killer near the poles. Because SSO is a near-polar orbit, satellites cross the polar regions on every ~95-minute orbit, which funnels them through the “horns” of the radiation belts. Furthermore, the SpaceX filing proposes shells as high as 2,000 km, placing hardware directly inside the Inner Van Allen Belt, a zone of trapped high-energy protons.

The math is unforgiving. Commercial HBM often begins to fail at 10–15 krad of Total Ionizing Dose (TID). In the proposed 2,000 km shells, a satellite could accumulate that dose in less than a year, creating a sharp reliability cliff where dense memory stacks degrade rapidly.

Economically, this is decisive. Avoiding this cliff requires massive lead shielding (which kills the launch arbitrage) or accepting a mission life measured in months rather than years. Neither option aligns with the capital efficiency assumptions of an orbital cloud, turning radiation from an engineering nuisance into a fundamental business-model killer.

Coming Up:

We have examined the physics, discussed logical compute types and looked at possible architectures. But does the ODC business case actually close? In Part 3, we run the numbers on “Electron Arbitrage” and the fatal flaw of hardware lock-in.