On Monday, March 16, 2026, the launch of the first commercially operational “Orbital Cloud” marked a definitive shift from simple data transport to in-situ processing.

For decades, the primary constraint of space operations has not been the ability to collect data but the capacity to return it to Earth. As modern sensors generate petabytes of high-resolution imagery and signals intelligence, that volume has collided with the “downlink bottleneck”: a physical limit imposed by oversubscribed radio frequency (RF) bands and limited ground-station contact windows.
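
The size of the bottleneck can be estimated with simple arithmetic: daily downlink capacity is the link rate multiplied by total contact time. The sketch below is illustrative only. The 800 Mbps rate, six 8-minute passes per day, and 5 TB/day of sensor output are hypothetical figures, not the specifications of any particular mission.

```python
def daily_downlink_gb(rate_mbps: float, passes_per_day: int, pass_minutes: float) -> float:
    """Gigabytes (decimal) that can be downlinked per day over an RF link.

    rate_mbps: sustained downlink rate in megabits per second.
    passes_per_day: usable ground-station contacts per day.
    pass_minutes: usable minutes of each contact.
    """
    contact_seconds = passes_per_day * pass_minutes * 60
    megabits = rate_mbps * contact_seconds
    return megabits / 8 / 1000  # megabits -> megabytes -> gigabytes


def daily_backlog_gb(generated_gb: float, downlinked_gb: float) -> float:
    """Data that stays on board each day because the link cannot carry it."""
    return max(0.0, generated_gb - downlinked_gb)


if __name__ == "__main__":
    # Hypothetical figures: 800 Mbps link, six 8-minute passes, 5 TB/day of imagery.
    capacity = daily_downlink_gb(rate_mbps=800, passes_per_day=6, pass_minutes=8)
    backlog = daily_backlog_gb(generated_gb=5000, downlinked_gb=capacity)
    print(f"downlink capacity: {capacity:.0f} GB/day")
    print(f"left on board:     {backlog:.0f} GB/day")
```

Under these assumptions the link carries 288 GB per day, so roughly 94% of the imagery never reaches the ground unless it is reduced on board.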

The Rise of the Orbital Data Center (ODC)

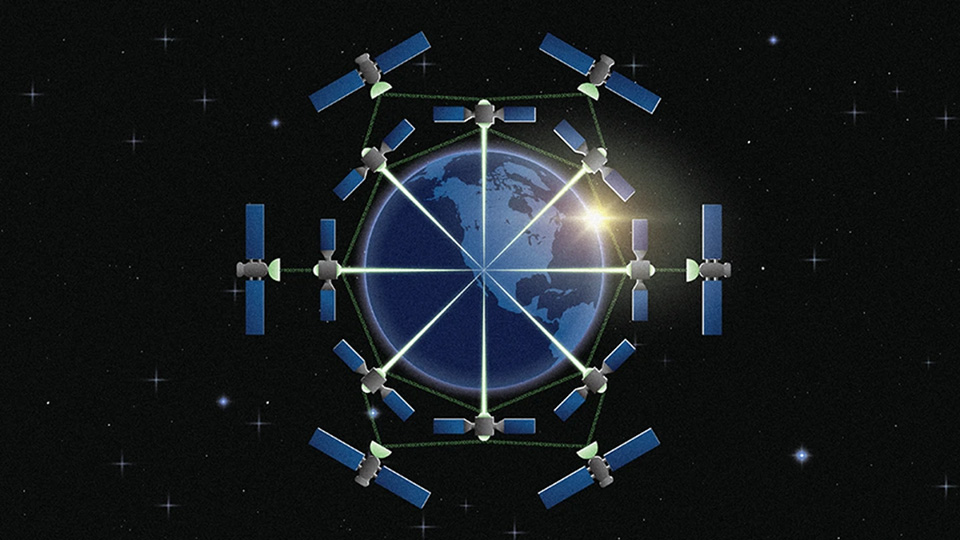

The emergence of Orbital Data Centers (ODCs) addresses the downlink bottleneck by moving the compute to the data rather than the data to the compute. This architectural shift was solidified in early 2026 with the commissioning of distributed compute nodes across Kepler Communications’ Tranche 1 constellation.

Kepler Communications’ constellation launched on Monday, March 16, 2026, a milestone that transitions Kepler’s network from a high-speed data transport layer into a scalable, cloud-native processing environment. Customers can now execute AI-driven workloads directly in orbit rather than relying on ground-based data centers.

By integrating NVIDIA-powered edge GPUs with optical inter-satellite links (OISLs), these platforms enable real-time data reduction. Instead of downlinking thousands of raw images, an ODC can run a computer vision model in orbit and transmit only the coordinates of each detected object.
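
The data-reduction pattern can be sketched in a few lines. Here a simple brightness threshold stands in for the onboard computer vision model (an assumption made only to keep the example self-contained), and the 512-by-512 tile, 8-bit pixels, and JSON payload format are hypothetical. The point is the size comparison between the raw tile and the list of detected coordinates that would actually be downlinked.

```python
import json


def detect_objects(tile: list[list[int]], threshold: int) -> list[tuple[int, int]]:
    """Stand-in for an onboard vision model: (row, col) of every pixel above threshold."""
    return [
        (r, c)
        for r, row in enumerate(tile)
        for c, value in enumerate(row)
        if value > threshold
    ]


def downlink_payload(tile: list[list[int]], threshold: int) -> bytes:
    """Encode only the detections, not the pixels, for transmission to the ground."""
    detections = detect_objects(tile, threshold)
    return json.dumps({"detections": detections}).encode("utf-8")


if __name__ == "__main__":
    # Hypothetical 512x512 8-bit tile: dark background with two bright targets.
    tile = [[0] * 512 for _ in range(512)]
    tile[100][200] = 255
    tile[300][50] = 250

    raw_bytes = 512 * 512  # one byte per 8-bit pixel
    payload = downlink_payload(tile, threshold=200)
    print(f"raw tile: {raw_bytes} bytes, downlinked payload: {len(payload)} bytes")
```

A real pipeline would also convert pixel positions to geographic coordinates using the image's georeferencing, which this sketch omits.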

Hardware Evolution: From Radiation-Hardened to Radiation-Tolerant

The current generation of orbital compute departs from traditional, “exotic” radiation-hardened space hardware. By pairing high-performance commercial-off-the-shelf (COTS) components with advanced software redundancy and localized shielding, operators have significantly increased FLOPS per watt in orbit.
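
The specific redundancy schemes in use are not named here. One widely used software technique for tolerating radiation-induced bit flips in COTS hardware is triple modular redundancy (TMR): compute the same result several times and accept the majority. The sketch below assumes a deterministic computation with hashable results; `tmr_vote` is a hypothetical helper, not part of any flight software library.

```python
from collections import Counter
from collections.abc import Callable, Hashable
from typing import TypeVar

T = TypeVar("T", bound=Hashable)


def tmr_vote(compute: Callable[[], T], replicas: int = 3) -> T:
    """Run `compute` `replicas` times and return the strict-majority result.

    Raises RuntimeError when no value wins a strict majority (for example,
    two replicas corrupted in different ways), so the caller can retry or
    fall back to a safe mode instead of acting on bad data.
    """
    results = [compute() for _ in range(replicas)]
    value, count = Counter(results).most_common(1)[0]
    if count * 2 <= replicas:
        raise RuntimeError(f"no majority among {results!r}")
    return value


if __name__ == "__main__":
    # Simulated single-event upset: the second run returns 42 with bit 4 flipped.
    outputs = iter([42, 42 ^ 0b10000, 42])
    print(tmr_vote(lambda: next(outputs)))  # 42
```

Running replicas one after another on the same processor only guards against transient upsets; more robust designs spread the replicas across separate cores or memory regions so that a single fault cannot corrupt every copy.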

Current Operational Hardware Specs (2026):

- Compute Modules: 40 NVIDIA Jetson Orin modules (Kepler Tranche 1); NVIDIA H100 GPUs (Starcloud-1).

- Connectivity: 2.5 Gbps optical relay links, expanding to 100 Gbps in future tranches.

- Architecture: Cloud-native, IP-based mesh networks allowing for dynamic workload shifting between nodes.
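
Dynamic workload shifting comes down to a placement decision: is it faster to process data where it sits, or to move it over an optical link to a less busy node? The sketch below assumes a deliberately simple cost model (transfer time plus queue wait plus compute time, identical compute speed on every node, and the full link rate available). The `Node` fields, node names, and job sizes are hypothetical; only the 2.5 Gbps and 100 Gbps link rates come from the list above.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    link_gbps: float      # optical link rate from the data's current location
    queue_seconds: float  # work already waiting on this node
    holds_data: bool      # True if the input data already sits on this node


def finish_time_s(node: Node, input_gb: float, compute_s: float) -> float:
    """Estimated seconds until a job finishes on `node` under the simple cost model."""
    transfer_s = 0.0 if node.holds_data else input_gb * 8 / node.link_gbps
    return transfer_s + node.queue_seconds + compute_s


def place_job(nodes: list[Node], input_gb: float, compute_s: float) -> Node:
    """Pick the node with the earliest estimated finish time."""
    return min(nodes, key=lambda n: finish_time_s(n, input_gb, compute_s))


if __name__ == "__main__":
    # Hypothetical job: 50 GB of imagery needing 30 s of GPU time.
    local = Node("local", link_gbps=float("inf"), queue_seconds=120, holds_data=True)
    peer_today = Node("peer-2.5G", link_gbps=2.5, queue_seconds=0, holds_data=False)
    peer_future = Node("peer-100G", link_gbps=100, queue_seconds=0, holds_data=False)

    print(place_job([local, peer_today], 50, 30).name)               # local
    print(place_job([local, peer_today, peer_future], 50, 30).name)  # peer-100G
```

At 2.5 Gbps, moving 50 GB takes 160 s, longer than the local queue, so the job stays put; at 100 Gbps the transfer drops to 4 s and shifting the job wins. A production scheduler would also need to account for returning results, link contention, and per-node power and thermal limits.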

This hardware enables “Agentic AI” in space—autonomous systems capable of making mission-critical decisions, such as altering a satellite’s orbit to avoid debris or re-tasking sensors based on detected anomalies, without waiting for a ground command.
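
An onboard decision rule for debris avoidance might look like the sketch below. It is illustrative only: the collision-probability thresholds, the `Conjunction` fields, and the comparison of time-to-closest-approach against a ground-response time are assumptions chosen to show why autonomy matters, not any operator's actual policy.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    IGNORE = "ignore"
    MONITOR = "monitor"
    REQUEST_GROUND = "request_ground"
    AUTONOMOUS_MANEUVER = "autonomous_maneuver"


@dataclass
class Conjunction:
    object_id: str
    collision_probability: float  # estimated probability of collision at closest approach
    hours_to_tca: float           # hours until time of closest approach (TCA)


# Hypothetical thresholds; real operators set their own.
MONITOR_PC = 1e-6
MANEUVER_PC = 1e-4


def decide(event: Conjunction, ground_response_hours: float) -> Action:
    """Choose an action, acting autonomously only when the ground cannot respond in time."""
    if event.collision_probability < MONITOR_PC:
        return Action.IGNORE
    if event.collision_probability < MANEUVER_PC:
        return Action.MONITOR
    if event.hours_to_tca <= ground_response_hours:
        return Action.AUTONOMOUS_MANEUVER  # waiting for a command would be too late
    return Action.REQUEST_GROUND


if __name__ == "__main__":
    # Hypothetical debris object approaching in 2 hours; ground needs 6 hours to respond.
    event = Conjunction("DEBRIS-0001", collision_probability=3e-4, hours_to_tca=2.0)
    print(decide(event, ground_response_hours=6.0))  # Action.AUTONOMOUS_MANEUVER
```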

Why Space Compute Wins

The move toward space-based AI is driven by three primary factors:

- Latency: Real-time applications, such as wildfire tracking or missile defense, cannot afford the minutes-long delay of traditional “store-and-forward” relay systems.

- Terrestrial Power Limits: On Earth, data centers are straining national power grids. In a dawn-dusk Sun-Synchronous Orbit (SSO), satellites can draw on nearly continuous solar energy, bypassing terrestrial electricity bottlenecks.

- Data Sovereignty: Processing data in a secure, orbital environment provides a layer of physical and jurisdictional security for sensitive government and commercial workloads.
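
The latency and power arguments can both be put into rough numbers. The first two functions compare waiting for the next ground-station contact with forwarding immediately over a relay path, counting only propagation and transmit time; the contact schedule and hop distances are hypothetical. The third computes the fraction of a circular orbit spent in Earth's shadow using the common cylindrical-shadow approximation, where the beta angle is the angle between the orbital plane and the direction of the Sun. A dawn-dusk orbit keeps a high beta angle for most of the year, so its eclipse fraction is zero for most of the year.

```python
import math

SPEED_OF_LIGHT_KM_S = 299_792.458
EARTH_RADIUS_KM = 6371.0


def store_and_forward_latency_s(event_s: float, contacts_s: list[float], transmit_s: float) -> float:
    """Latency when data must wait on board for the next ground-station contact."""
    upcoming = [t for t in contacts_s if t >= event_s]
    if not upcoming:
        raise ValueError("no contact scheduled after the event")
    return min(upcoming) - event_s + transmit_s


def relay_latency_s(hop_lengths_km: list[float], transmit_s: float) -> float:
    """Latency over an always-available relay path (propagation + transmit only)."""
    return sum(hop_lengths_km) / SPEED_OF_LIGHT_KM_S + transmit_s


def eclipse_fraction(altitude_km: float, beta_deg: float) -> float:
    """Fraction of a circular orbit spent in Earth's shadow (cylindrical shadow model)."""
    r = EARTH_RADIUS_KM + altitude_km
    beta = math.radians(beta_deg)
    beta_star = math.asin(EARTH_RADIUS_KM / r)  # no eclipse when |beta| exceeds this
    if abs(beta) >= beta_star:
        return 0.0
    x = math.sqrt(altitude_km**2 + 2 * EARTH_RADIUS_KM * altitude_km) / (r * math.cos(beta))
    return math.acos(x) / math.pi


if __name__ == "__main__":
    # Hypothetical: detection at t = 600 s, next contact at t = 5400 s, 2 s to transmit.
    print(store_and_forward_latency_s(600, [0, 5400, 11000], 2.0))  # 4802.0
    print(relay_latency_s([2000, 2000, 1500], 2.0))                 # about 2.02
    # 550 km orbit: Sun in the orbital plane vs. a high-beta (dawn-dusk-like) geometry.
    print(round(eclipse_fraction(550, 0), 3))  # about 0.372
    print(eclipse_fraction(550, 80))           # 0.0
```

With these hypothetical inputs, store-and-forward adds about 80 minutes of waiting, while the relay path is dominated by transmit time. At 550 km with the Sun in the orbital plane, the satellite spends about 37% of each orbit in shadow; at an 80° beta angle it never enters eclipse.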

“Intelligence must live wherever data is generated,” said Jensen Huang, founder and CEO of NVIDIA. “AI processing across space and ground systems transforms orbital data centers into instruments of discovery and spacecraft into self-navigating systems.”

Regulatory and Environmental Outlook

Despite the technical progress, the rapid proliferation of ODCs has raised concerns regarding orbital congestion. Calculations from January 2026 suggest the margin for error in active satellite management has shrunk significantly, with thousands of new compute-heavy satellites planned by SpaceX and Starcloud.

The next 24 months will be defined by the “Starship Effect”—as launch costs continue to drop toward the $200/kg threshold, the economic argument for hyperscale orbital deployments becomes undeniable. The focus will now shift from proving the hardware works to standardizing the “inter-satellite backplane” that will connect these floating data centers into a seamless extension of the global cloud.
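
The launch-cost argument rests on simple arithmetic: launch cost scales linearly with mass, so as the price per kilogram falls, launch becomes a smaller share of the total cost of deploying a compute node. The sketch assumes a hypothetical 1,000 kg node carrying $5 million of hardware and compares an illustrative $2,000/kg price with the $200/kg threshold; neither the node mass nor the hardware cost comes from a real program.

```python
def launch_cost_usd(mass_kg: float, price_per_kg: float) -> float:
    """Launch cost for a payload at a given price per kilogram."""
    return mass_kg * price_per_kg


def launch_share(mass_kg: float, price_per_kg: float, hardware_usd: float) -> float:
    """Fraction of total deployment cost (launch + hardware) spent on launch."""
    launch = launch_cost_usd(mass_kg, price_per_kg)
    return launch / (launch + hardware_usd)


if __name__ == "__main__":
    # Hypothetical node: 1,000 kg with $5M of onboard hardware.
    for price in (2000, 200):
        cost = launch_cost_usd(1000, price)
        share = launch_share(1000, price, 5_000_000)
        print(f"${price}/kg: launch ${cost:,.0f} ({share:.0%} of total)")
```

Under these assumptions, launch falls from about 29% of the deployment cost to about 4%, illustrating why cheaper launch strengthens the case for larger orbital deployments.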