SatNews Editorial Analysis

Before we dream of floating data centers, we must understand why the SmallSat is the only laboratory that matters.

Welcome to The Fractal Lab: a three-part series on why orbital computing succeeds or fails first at 10 kilograms, not 10,000.

Read Part 1: The Physics Trap | Read Part 2: The Data Gravity Dilemma

Part 3: The Economic Mirage

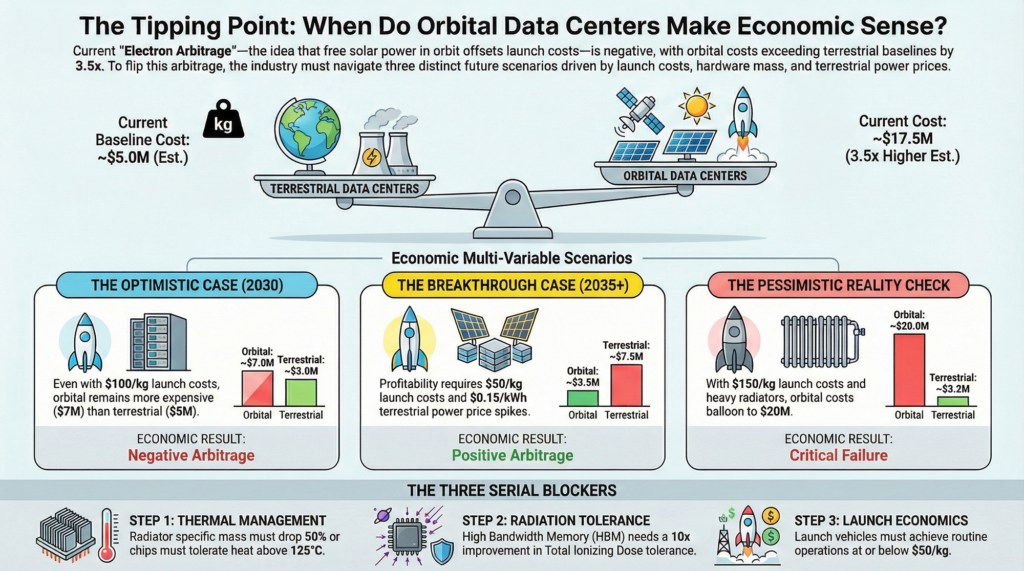

[Recap]: In Part 1 and Part 2, we outlined the thermal ceilings and architectural schisms of orbital computing. We established that space is an insulator, not a coolant; that data gravity bifurcates the market into edge reduction hubs and batch trainers; and that both monolithic and swarm architectures face fundamental engineering constraints. Finally, we must ask the question that kills most space startups: Does the arbitrage actually pay for the launch?

The Electron Arbitrage

Ultimately, the viability of any Orbital Data Center will be decided by economics. Specifically, by what we might call electron arbitrage: whether the savings from “free” solar power in orbit can offset the enormous cost of putting the hardware there in the first place. If orbital power lets a station deliver a dollar’s worth of compute for less than a grid-powered facility on the ground can, the business case opens. If it does not, no amount of engineering elegance can save the venture.

The pitch is seductive. Terrestrial data centers face genuine and worsening constraints. Grid interconnection queues in the United States now average five years, with some developers reporting wait times of seven years or longer. The U.S. interconnection queue held over 2,600 GW of proposed capacity by late 2024 (more than twice the country’s installed generating capacity) and only about 13% of projects that entered the queue between 2000 and 2019 had reached commercial operation. Permitting for new high-voltage transmission lines can take a decade; the U.S. built only 180 miles of high-voltage transmission annually in the most recent two-year period, down from 1,700 miles per year a decade earlier. Regional power markets are tightening as AI training facilities compete with electrification mandates for finite grid capacity. In some markets, hyperscalers are signing power purchase agreements at premiums that would have been unthinkable five years ago.

Meanwhile, a solar array in Geostationary Orbit receives approximately 1,360 watts per square meter of solar irradiance, roughly six to eight times what a terrestrial installation receives when averaged over day-night cycles and weather. Space solar array specific power varies widely: NASA’s dataset of flown missions clusters around 30 W/kg, while state-of-the-art rigid arrays achieve approximately 200 W/kg. For a next-generation deployable array at megawatt scale, a specific power of 100 W/kg is a reasonable projection, aggressive relative to heritage systems but conservative relative to laboratory demonstrations. At that figure, a 1-megawatt array masses roughly 10,000 kilograms. At the aspirational Starship launch cost of $200 per kilogram, that represents a $2 million capital expenditure for the power system alone.
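The array-sizing arithmetic above can be sketched in a few lines. This is a minimal illustration using only the figures quoted in the text; the function and variable names are assumptions made for the example, and the flat per-kilogram launch price is the article's aspirational figure, not a quoted tariff.

```python
# Inputs are the article's figures; names are illustrative, not from any library.
ARRAY_POWER_W = 1_000_000      # 1 MW array
LAUNCH_USD_PER_KG = 200        # aspirational Starship price


def array_mass_kg(power_w: float, specific_power_w_per_kg: float) -> float:
    """Array mass implied by a given specific power."""
    return power_w / specific_power_w_per_kg


def launch_cost_usd(mass_kg: float, usd_per_kg: float) -> float:
    """Launch cost for a given mass at a flat per-kilogram price."""
    return mass_kg * usd_per_kg


# 30 W/kg: flown-mission cluster; 100 W/kg: the projection used here;
# 200 W/kg: state-of-the-art rigid arrays.
for specific_power in (30, 100, 200):
    mass = array_mass_kg(ARRAY_POWER_W, specific_power)
    cost = launch_cost_usd(mass, LAUNCH_USD_PER_KG)
    print(f"{specific_power:>3} W/kg -> {mass:>8,.0f} kg, ${cost / 1e6:.1f}M to launch")
```

At 100 W/kg the sketch reproduces the 10,000 kg and $2 million figures; the other two rows simply show how strongly the result depends on the specific-power assumption.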

Two million dollars for a megawatt of generation capacity that never sees a cloud, never experiences nightfall (outside brief seasonal eclipses in GEO), and never requires a utility interconnection agreement. On its face, the economics appear compelling. But the power plant is only half the story.

The Napkin Math: Earth vs. Orbit

To stress-test the arbitrage, we must compare the total cost of operating equivalent compute capacity on the ground and in orbit. We deliberately construct both cases with care, avoiding the common trap of comparing orbital worst-case to terrestrial best-case.

The Terrestrial Baseline (1 MW, 5-Year Operation)

A terrestrial data center’s operating cost is dominated by electricity, cooling, and facility overhead. But the true cost of a modern hyperscale deployment includes several line items that back-of-envelope calculations frequently omit:

| Cost Component | Estimate | Notes |
| --- | --- | --- |
| Grid Electricity | $2.2M | $0.05/kWh × 1 MW × 8,760 h × 5 yr |
| Cooling & Mechanical | $0.8M | PUE overhead, amortized chillers |
| Land & Permitting | $0.5M | Site lease, environmental review |
| Grid Interconnection | $0.8M | Substation upgrades, queue fees |
| Network & Connectivity | $0.4M | Dark fiber, IX peering |
| Total Terrestrial (5yr) | ~$4.7M | Honest baseline |

This honest terrestrial baseline of approximately $4.7 million is notably higher than the $3.2 million figure that omits land, permitting, and interconnection costs. We include these because they represent real capital that must be deployed, and because the tightening of grid access is one of the primary arguments orbital advocates cite in favor of the space alternative.
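As a check on the terrestrial table, the line items can be summed directly. The sketch below is illustrative only: the dictionary keys mirror the table rows, the electricity row is recomputed from the formula in the Notes column, and every other value is taken from the table as given.

```python
HOURS_PER_YEAR = 8_760


def electricity_cost_usd(price_per_kwh: float, load_kw: float, years: float) -> float:
    """Energy bill for a constant load at a flat price."""
    return price_per_kwh * load_kw * HOURS_PER_YEAR * years


# $0.05/kWh x 1 MW (1,000 kW) x 8,760 h x 5 yr, expressed in millions of dollars
grid_electricity_musd = electricity_cost_usd(0.05, 1_000, 5) / 1e6

# All values in $M; non-electricity rows are copied from the table.
terrestrial_musd = {
    "Grid Electricity": round(grid_electricity_musd, 1),
    "Cooling & Mechanical": 0.8,
    "Land & Permitting": 0.5,
    "Grid Interconnection": 0.8,
    "Network & Connectivity": 0.4,
}

total_terrestrial_musd = sum(terrestrial_musd.values())
print(f"Grid electricity: ${grid_electricity_musd:.2f}M")
print(f"Total terrestrial (5yr): ~${total_terrestrial_musd:.1f}M")
```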

The Orbital Station (1 MW, GEO, 5-Year Mission)

The orbital cost model must account for every system required to generate, utilize, and reject a megawatt of compute power in vacuum:

| System | Mass (kg) | Cost @ $200/kg | Assumptions |
| --- | --- | --- | --- |
| Solar Array (1MW) | 10,000 | $2.0M | 100 W/kg specific power |
| Thermal Radiators | 30,000 | $6.0M | ~3,000 m² @ 10 kg/m² deployed |
| Spacecraft Bus & Structure | 8,000 | $1.6M | ADCS, power conditioning, C&DH |
| Non-Recurring Engineering | n/a | $2.5M | Design, test, qual (conservative) |
| Station-Keeping (5yr) | 1,500 | $0.3M | Electric propulsion consumables |
| Insurance & Regulatory | n/a | $1.5M | ITU filing, launch insurance, liability |
| Ground Segment (5yr) | n/a | $2.0M | Mission ops, telemetry, anomaly response |
| Decommission & Deorbit | 500 | $0.5M | Graveyard orbit maneuver, passivation |
| Total Orbital (5yr) | ~50,000 | ~$16.4M | Conservative estimate |

A note on the radiator mass assumption: the 10 kg/m² figure assumes deployable panel structures including honeycomb substrates, heat pipes, deployment mechanisms, and structural reinforcement for stiffness in the thermal environment. Current state-of-the-art deployable radiators achieve 5–8 kg/m² for modest areas, but scaling to 3,000 m² introduces structural challenges that push the mass fraction higher. Rigid-panel radiators would be significantly heavier; lightweight membrane radiators are theoretical.
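The orbital table can be rebuilt the same way. The sketch below is a hedged reconstruction, not the authors' model: it assumes the solar array, radiators, bus, and station-keeping rows are priced purely as mass × $200/kg, and it treats decommissioning as a fixed $0.5M line item because the tabulated figure is larger than 500 kg × $200/kg would give. All names are illustrative.

```python
LAUNCH_USD_PER_KG = 200

RADIATOR_AREA_M2 = 3_000     # approximate deployed area from the table
RADIATOR_KG_PER_M2 = 10      # assumed areal density discussed in the note above

# Rows assumed to be priced as mass x launch price
launched_mass_kg = {
    "Solar Array (1MW)": 10_000,
    "Thermal Radiators": RADIATOR_AREA_M2 * RADIATOR_KG_PER_M2,
    "Spacecraft Bus & Structure": 8_000,
    "Station-Keeping (5yr)": 1_500,
}

# Rows taken from the table as fixed line items, in $M
fixed_cost_musd = {
    "Non-Recurring Engineering": 2.5,
    "Insurance & Regulatory": 1.5,
    "Ground Segment (5yr)": 2.0,
    "Decommission & Deorbit": 0.5,   # kept as tabulated, not mass x price
}
DECOMMISSION_MASS_KG = 500           # counted in total mass only

mass_priced_musd = sum(launched_mass_kg.values()) * LAUNCH_USD_PER_KG / 1e6
total_mass_kg = sum(launched_mass_kg.values()) + DECOMMISSION_MASS_KG
total_orbital_musd = mass_priced_musd + sum(fixed_cost_musd.values())

print(f"Total mass: ~{total_mass_kg:,} kg")
print(f"Mass-priced items: ${mass_priced_musd:.1f}M")
print(f"Total orbital (5yr): ~${total_orbital_musd:.1f}M")
```

Separating mass-priced items from fixed items makes it easy to see which rows respond to cheaper launch and which do not.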

The Gap

Even with an honest terrestrial baseline that accounts for grid constraints, the orbital station’s 5-year cost (~$16.4M) exceeds the terrestrial equivalent (~$4.7M) by a factor of roughly 3.5. And this comparison is generous to the orbital case in several ways: it assumes Starship achieves its target pricing, it excludes the cost of the compute hardware itself (which is equivalent in both scenarios), and it does not account for the reduced computational throughput caused by radiation-induced errors and thermal throttling in orbit.

The arbitrage is negative. “Free” solar power does not compensate for the infrastructure required to use it. But a negative arbitrage today does not necessarily mean a negative arbitrage forever. Perhaps the curves will cross. This is where the second killer emerges.

The Hardware Lock-In Problem

The napkin math reveals a negative arbitrage today. Advocates argue that this is a timing problem. Launch costs will continue to fall, terrestrial power costs will continue to rise, and the curves will eventually cross. This argument has a fatal flaw that no amount of cost reduction can fix: hardware obsolescence. Even if the arbitrage flips positive in 2030, the machine you launched in 2027 is already losing the race.

Terrestrial data centers operate on a relentless refresh cycle. AI accelerators are replaced every two to three years as new architectures deliver 2–3× performance-per-watt improvements. Memory generations turn over every three to four years. Even in conventional enterprise computing, a five-year server lifecycle is considered long. The economics of cloud computing are built on the assumption that hardware is a consumable, not an asset.

An orbital station cannot participate in this cycle. The hardware launched in 2027 will still be the hardware running in 2032 or 2035. During that period, terrestrial competitors will have refreshed their accelerators two or three times, each generation compounding performance advantages. By year five, the orbital hardware is not merely outdated; it is economically irrelevant, offering a fraction of the performance-per-watt available on the ground.

The Cost of a Refresh

Some advocates propose robotic servicing as the solution: autonomous arms that swap server blades like cartridges, refreshing the compute hardware without deorbiting the station. In principle, this is elegant. In practice, it transforms a data business into a logistics business, and the economics are punishing.

Consider what an orbital servicing mission requires: a dedicated spacecraft capable of rendezvous and proximity operations, a robotic manipulation system, a payload of replacement server modules designed for on-orbit insertion, and the ground operations team to execute the mission. The only operational precedent is Northrop Grumman’s Mission Extension Vehicle, which provides life-extension services to GEO communications satellites. According to Intelsat’s SEC filings, MEV service costs approximately $13 million per year, roughly $65 million over a five-year engagement, and the MEV performs a far simpler task than hot-swapping server racks: it merely provides station-keeping propulsion to an otherwise functional satellite.

An ODC hardware refresh mission would be vastly more complex: rendezvous, docking, pressurized module access, robotic blade extraction and insertion, thermal loop reconnection, and validation. Even if Starship-class logistics reduce the launch component to $5–10 million, the spacecraft, robotics, and operations overhead would plausibly push total mission cost to $30–50 million. For a 1 MW station generating perhaps $3–5 million per year in compute revenue, a single refresh mission consumes a full decade of revenue. The math does not close.
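The payback arithmetic behind that claim is simple enough to sketch. The ranges are the ones quoted above; the helper name and the pairing of low and high ends are assumptions made for illustration.

```python
def years_of_revenue(mission_cost_musd: float, annual_revenue_musd: float) -> float:
    """How many years of gross revenue a single refresh mission would consume."""
    return mission_cost_musd / annual_revenue_musd


MISSION_COST_RANGE_MUSD = (30, 50)     # plausible total refresh-mission cost
ANNUAL_REVENUE_RANGE_MUSD = (3, 5)     # compute revenue for a 1 MW station

# Every combination of the quoted low and high ends
for cost in MISSION_COST_RANGE_MUSD:
    for revenue in ANNUAL_REVENUE_RANGE_MUSD:
        years = years_of_revenue(cost, revenue)
        print(f"${cost}M mission / ${revenue}M per year -> {years:.1f} years of revenue")

# The MEV precedent: about $13M per year over a five-year engagement
mev_five_year_musd = 13 * 5
print(f"MEV five-year service cost: ~${mev_five_year_musd}M")
```

Pairing low with low and high with high gives the "full decade" figure; the mixed pairings bracket it at roughly six and seventeen years, which does not change the conclusion.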

Until there is a “UPS Truck for Orbit,” a reusable servicing vehicle that operates weekly at marginal cost, the orbital station is economically committed to its launch-day hardware. Without cheap, routine logistics, the system is locked into 2027-era silicon until decommission, competing against terrestrial facilities running 2032-era accelerators. This is not a gap that “free” power can bridge.

The Refresh Penalty

To appreciate the severity of the lock-in, consider a concrete scenario. Assume a hypothetical ODC launches in 2027 equipped with accelerators delivering 1,000 TFLOPS per chip at 500W. By 2030, terrestrial competitors deploy chips at 3,000 TFLOPS per chip at 400W, a 3.75× improvement in performance-per-watt, consistent with recent generational leaps from NVIDIA and AMD. By 2032, the gap widens to 8–10×.

The orbital station’s “free” power advantage (saving roughly $0.05/kWh versus terrestrial rates) generates approximately $440,000 per year in energy savings for a 1 MW facility. But the performance-per-watt disadvantage means the station requires 4–10× more watts to deliver equivalent compute by mid-mission. Watts it does not have, because its solar array and thermal systems were sized for launch-day hardware. The station does not merely fall behind; it becomes structurally incapable of competing.
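The refresh penalty can be put in numbers with a short sketch. The chip figures are the hypothetical ones from the scenario above, and the variable names are illustrative; the point is only to show how the 3.75× gap and the roughly $440,000 in annual energy savings fall out of the stated inputs.

```python
HOURS_PER_YEAR = 8_760


def tflops_per_watt(tflops: float, watts: float) -> float:
    """Performance-per-watt of a single accelerator."""
    return tflops / watts


# Hypothetical chips from the scenario
orbital_2027 = tflops_per_watt(1_000, 500)        # launch-day hardware
terrestrial_2030 = tflops_per_watt(3_000, 400)    # ground hardware three years later
efficiency_gap = terrestrial_2030 / orbital_2027

# Energy savings from "free" power for a 1 MW facility at $0.05/kWh
STATION_POWER_KW = 1_000
POWER_PRICE_USD_PER_KWH = 0.05
annual_energy_savings_usd = POWER_PRICE_USD_PER_KWH * STATION_POWER_KW * HOURS_PER_YEAR

# Power the 2027 hardware would need to match 1 MW of 2030 hardware
power_needed_kw = STATION_POWER_KW * efficiency_gap

print(f"Performance-per-watt gap by 2030: {efficiency_gap:.2f}x")
print(f"Annual energy savings: ${annual_energy_savings_usd:,.0f}")
print(f"Power needed to keep pace: {power_needed_kw:,.0f} kW")
```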

The Edge Paradox

So the monolith fails on economics. The swarm fails on physics. The hardware lock-in kills both on time. One might argue that the middle ground survives.

The Real-Time Reduction Hub described in Part 2 (Compute 1) is the most intellectually honest use case for orbital computing: a station that ingests raw sensor data from adjacent Earth-observation satellites, processes it in orbit, and downlinks only refined insights. No terrestrial data to upload. No latency-sensitive users to serve. Just orbital data, processed where it is collected. It is a compelling pitch, and it contains a hidden, fatal paradox.

Why the Hub Loses Its Market

The entire premise of the Reduction Hub depends on a gap: the sensor satellite generates more data than it can process or downlink, creating demand for a centralized orbital processor. But this gap is closing, rapidly, from below.

In 2020, a typical Earth-observation SmallSat carried no onboard inference capability. Raw imagery was downlinked in bulk to ground stations for processing. By 2024, satellites like those in the OroraTech and Satellogic constellations began flying with onboard AI accelerators capable of performing cloud detection, change detection, and basic classification at the edge. On the sensor satellite itself.

A 5-watt onboard accelerator that can identify and discard cloud-covered frames before transmission eliminates 60–80% of the downlink burden without any external processing. A 10-watt accelerator capable of running lightweight inference models, including object detection, anomaly flagging, and spectral classification, can reduce the useful data to a fraction of the raw collection.

This trajectory points toward a clear conclusion: as onboard processing improves, the sensor satellite becomes its own edge processor. The volume of data that requires external processing shrinks with each hardware generation, and the business case for a dedicated orbital hub shrinks with it.

The Federated Middle Ground

The one surviving niche for orbital processing is what might be called “Federated Fusion,” a smaller aggregator node that receives pre-processed insights from multiple sensor satellites and combines them into a composite product. For example, fusing synthetic aperture radar data with electro-optical imagery and AIS ship-tracking data to produce a maritime domain awareness picture that no single sensor can generate alone.

This is a real capability with real customers: defense and intelligence agencies, maritime insurers, commodity traders. But it is a fundamentally different machine than the “AWS in Space” currently being pitched over cocktails at satellite conferences. It is a specialized, modest-power aggregation node, tens of kilowatts rather than megawatts, and it looks far more like an upgraded SmallSat than a floating data center.

The paradox is complete: the strongest surviving use case for orbital computing is one that a SmallSat can already serve. The hub’s customer is building its own replacement.

The Latency Exception

At this point, the honest reader might conclude that orbital computing is entirely illusory. It is not. There is one class of workloads where the economic comparison is irrelevant, because the alternative does not exist.

Certain applications require computational results faster than a round-trip to the ground permits. Space domain awareness (tracking debris and predicting collisions) requires autonomous maneuver decisions within seconds. Military applications involving satellite-to-satellite coordination cannot tolerate the latency or the vulnerability of a ground-station relay. Autonomous rendezvous and proximity operations, whether for servicing, inspection, or active debris removal, require real-time guidance that is physically impossible to provide from the ground with acceptable margins.

For these workloads, the question is not “is orbital compute cheaper than terrestrial?” but “can the mission be accomplished at all without onboard processing?” The answer is frequently no. And the compute required is typically modest: single-digit to tens of watts, running specialized inference models or guidance algorithms. This is not a market that justifies gigawatt infrastructure. It is a market that justifies smart SmallSats.

This is not a trivial distinction. The latency exception is real, growing, and commercially significant. But it reinforces, rather than undermines, the fractal lab thesis: the platforms that will serve this market are 10–100 kilogram spacecraft, not 10,000-kilogram stations.

The SpaceX Filing: A Cost Reality Check

If the latency exception defines the ceiling of viable orbital compute, SpaceX’s January 2026 FCC filing proposes to blow through it. In Part 2, we examined the orbital mechanics and networking challenges of that filing, which covers up to one million AI inference satellites. Here, we must examine what it implies economically, because the numbers are staggering.

Even an extremely optimistic unit economics model reveals the scale of the capital commitment:

| Parameter | Estimate |
| --- | --- |
| Satellites (12U-class, ~20 kg each) | 1,000,000 units |
| Total constellation mass | ~20,000,000 kg (20,000 metric tons) |
| Launch cost @ $200/kg | $4 billion |
| Unit manufacturing @ $50K/sat (aggressive) | $50 billion |
| Ground segment & operations (5yr) | $5–10 billion |
| Total Capital Commitment | $59–64 billion (before a single inference is run) |

Sixty billion dollars. For context, this is comparable to a full year of capital expenditure at one of the largest terrestrial hyperscalers. It approaches the cumulative investment in the entire commercial satellite industry over the past decade. And it must be committed before the constellation generates a single dollar of inference revenue.

The manufacturing assumption deserves scrutiny. At $50,000 per satellite, we are assuming automotive-scale production economics for spacecraft, a production rate of roughly 200,000 units per year over a five-year deployment. Starlink has demonstrated that satellite mass production is possible, but Starlink’s v2 Mini satellites are optimized for a fundamentally different mission (broadband relay) than AI inference, which demands denser compute, more thermal management, and potentially more radiation shielding.

Even if SpaceX achieves these economics, the constellation faces the same hardware lock-in problem at civilization scale. One million satellites launched in 2028 are one million obsolete computers by 2033. The replacement cycle requires launching another million, another $60 billion, while deorbiting the old fleet. The “just launch more” argument does not escape the refresh trap; it compounds it into the most expensive planned obsolescence in human history.
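The constellation figures in this section follow from a handful of multiplications, sketched below. All inputs are the estimates from the table and the surrounding text; the names are illustrative, and integer dollars are used so the totals stay exact.

```python
SATELLITES = 1_000_000
MASS_PER_SAT_KG = 20                 # 12U-class
LAUNCH_USD_PER_KG = 200
UNIT_COST_USD = 50_000               # aggressive manufacturing assumption
GROUND_SEGMENT_RANGE_USD = (5_000_000_000, 10_000_000_000)   # 5-year range
DEPLOYMENT_YEARS = 5

total_mass_kg = SATELLITES * MASS_PER_SAT_KG
launch_cost_usd = total_mass_kg * LAUNCH_USD_PER_KG
manufacturing_cost_usd = SATELLITES * UNIT_COST_USD

# Low and high ends of the total capital commitment
total_low_usd, total_high_usd = (
    launch_cost_usd + manufacturing_cost_usd + ground
    for ground in GROUND_SEGMENT_RANGE_USD
)

units_per_year = SATELLITES // DEPLOYMENT_YEARS

print(f"Constellation mass: {total_mass_kg / 1_000:,.0f} metric tons")
print(f"Launch: ${launch_cost_usd / 1e9:.0f}B, manufacturing: ${manufacturing_cost_usd / 1e9:.0f}B")
print(f"Total commitment: ${total_low_usd / 1e9:.0f}B to ${total_high_usd / 1e9:.0f}B")
print(f"Required production rate: {units_per_year:,} satellites per year")
```

Manufacturing dominates the total by more than an order of magnitude over launch, which is why the $50,000 unit-cost assumption deserves the scrutiny it gets above.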

Conclusion: The Fractal Lab Wins

If we are honest about the numbers, and this series has tried to be, the orbital data center market looks less like AWS in space and more like a niche for scientific computing, latency-critical in-orbit decision-making, and data fusion for defense and intelligence customers. These are real markets. They are not small markets. But they are not the gigawatt-scale AI training facilities that dominate conference keynotes and investor decks.

Technology pivots are often cited as potential saviors. Neuromorphic processors that mimic biological neural networks could theoretically reduce heat per operation by an order of magnitude. Analog computing architectures that perform matrix multiplication in the physics of resistor arrays promise similar efficiency gains. But space-qualified neuromorphic and analog processors are likely a decade or more away: even optimistic projections place commercial availability of space-rated neuromorphic chips no earlier than the mid-2030s.

More importantly, the neuromorphic argument reinforces the central thesis rather than undermining it. If we need entirely new, unproven chip architectures to make the economics work, where will those chips be tested first? Not on a $60 billion constellation. Not on a pressurized monolith that takes five years to design and build. They will be tested on a SmallSat—a 10-kilogram, $500,000 mission that can fail on a Tuesday and fly a redesign by Thursday. That is where flight heritage is born.

This is the fractal lab at work.

What the SmallSat Is Already Proving

The technologies being validated today on commercial SmallSat missions are not adjacent to the ODC problem—they are identical to it. Deployable radiators being tested on 12U and 16U CubeSats are miniature versions of the thermal management systems any orbital station would require. ASIC-based onboard processors pushing the boundaries of watts-per-TFLOP in compact thermal envelopes are solving the same compute-density challenges at a scale where failure is instructive rather than catastrophic. Inter-satellite optical links being demonstrated on Starlink and other LEO constellations are the backbone technology for any distributed orbital compute architecture.

Every one of these subsystems must achieve flight heritage before it can be trusted at scale. And flight heritage is accumulated not in simulation, not in thermal vacuum chambers, but in orbit—on SmallSats.

The Path Forward

The road to the gigawatt cloud, if it exists at all, is paved with 10-kilogram experiments. Whether that road leads to a viable market or a graveyard of PowerPoint architectures will be determined not by vision, not by FCC filings, and not by the ambitions of any single company. It will be determined by the physics of heat rejection, the economics of electron arbitrage, and the relentless obsolescence of silicon.

If orbital computing succeeds at scale, it may not look like the spaceborne hyperscale cloud we imagine today. It may instead resemble a thousand SmallSats quietly solving the problems that megastructures cannot—each one a fractal laboratory, testing at small scale the technologies that will either vindicate or bury the dream of computing in the void.

Appendix: Sensitivity Analysis

When Does the Arbitrage Flip?

The baseline comparison shows a 5-year orbital cost of ~$16.4M vs. a terrestrial cost of ~$4.7M (a 3.5× gap). Below we examine what must change for the arbitrage to become positive, and how likely each parameter shift is by 2030.

| Parameter | Baseline | Break-Even | Likelihood | Notes |
| --- | --- | --- | --- | --- |
| Launch Cost ($/kg) | $200 | <$50 | Medium | Starship targets this; proven reliability at scale remains uncertain |
| Radiator Mass (kg/m²) | 10 | <2.5 | Low | Requires revolutionary deployable structures; current SOA is 5–8 kg/m² |
| Terrestrial Power ($/kWh) | $0.05 | >$0.20 | Low–Med | Only in energy-constrained markets (Singapore, Japan) or grid emergencies |
| Rad-Hard Premium | 10–50× | <2× | Very Low | Current rad-hard memory is far too expensive with lower performance |
| Mission Lifetime (yr) | 5 | >15 | Low | Requires solving HBM radiation tolerance; worsens lock-in problem |
| Compute Refresh (yr) | 2–3 | >10 | Very Low | Industry trend is toward faster refresh for AI accelerators |
| Optical Downlink (Gbps) | 100 | >10,000 | Medium | Needed for batch model; technically feasible but undemonstrated at scale |

The critical insight from this analysis is that no single parameter shift closes the gap. Even if launch costs drop to $50/kg (a 4× improvement over baseline), the line items that do not scale with launch price (non-recurring engineering, insurance, ground operations, and decommissioning, about $6.5M in the baseline table) already exceed the ~$4.7M terrestrial total. Closing the arbitrage requires simultaneous breakthroughs across at least three independent parameters, a conjunction of advances that, while not impossible, should temper expectations for any near-term business case.
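A rough way to probe the launch-price sensitivity is to split the baseline orbital table into items that scale with launch price and items that do not. The split below is an assumption of this sketch, not the authors' published model: the solar array, radiators, bus, and station-keeping mass (49,500 kg) are priced per kilogram, while non-recurring engineering, insurance, ground segment, and decommissioning are held at their tabulated values.

```python
MASS_PRICED_KG = 49_500      # solar array + radiators + bus + station-keeping (assumed split)
FIXED_MUSD = 6.5             # NRE 2.5 + insurance 1.5 + ground 2.0 + decommission 0.5
TERRESTRIAL_MUSD = 4.7       # 5-year terrestrial baseline


def orbital_total_musd(launch_usd_per_kg: float) -> float:
    """5-year orbital cost in $M when only the launch price changes."""
    return MASS_PRICED_KG * launch_usd_per_kg / 1e6 + FIXED_MUSD


for price in (200, 100, 50):
    total = orbital_total_musd(price)
    ratio = total / TERRESTRIAL_MUSD
    print(f"${price:>3}/kg -> orbital ~${total:.1f}M ({ratio:.1f}x terrestrial)")
```

Under these simplified assumptions, cheaper launch narrows the gap but does not close it, because the fixed items alone sit above the terrestrial total.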

The one scenario that could fundamentally change the calculus is a sustained, structural increase in terrestrial power costs driven by AI demand outstripping grid expansion. If U.S. wholesale electricity prices for data center customers rise above $0.15–$0.20/kWh and remain elevated for a decade, the electron arbitrage begins to approach break-even, but only if launch costs fall simultaneously. This is the scenario that ODC advocates are implicitly betting on, and it is the one parameter where the trend line offers genuine, if uncertain, support.

Multi-Variable Scenarios

The sensitivity table examines parameters in isolation. Real outcomes depend on how they combine. Three scenarios illustrate the range of plausible futures.

Optimistic Case (2030)

| Parameter | Value |
| --- | --- |
| Launch cost | $100/kg |
| Radiator specific mass | 5 kg/m² |
| Terrestrial power cost | $0.10/kWh |
| Result | Orbital ~$7M vs. Terrestrial ~$5M. Still negative, but closing. |

Breakthrough Case (2035+)

| Parameter | Value |
| --- | --- |
| Launch cost | $50/kg |
| Radiator specific mass | 3 kg/m² (advanced gossamer structures) |
| Terrestrial power cost | $0.15/kWh (grid saturation) |
| Mission life | 10 years (neuromorphic chips, lower rad sensitivity) |
| Result | Orbital ~$3.5M vs. Terrestrial ~$7.5M. Positive arbitrage. |

Pessimistic Case (Reality Check)

| Parameter | Value |
| --- | --- |
| Launch cost | $150/kg (Starship delays, regulatory burden) |
| Radiator specific mass | 12 kg/m² (operational ISS-class hardware) |
| HBM shielding | Adds 50% mass penalty |
| Result | Orbital ~$20M vs. Terrestrial ~$3.2M. Economics worsen. |

The breakthrough case is instructive. Positive arbitrage requires not just cheaper launch and lighter radiators, but a simultaneous increase in terrestrial power costs and a generational leap in radiation-tolerant chip architectures. Each of these advances is plausible individually. Their conjunction within a single decade is the bet that ODC advocates are making, whether they frame it that way or not.

Critical Path Dependencies

The scenarios above reveal three serial blockers. All three must be solved for the arbitrage to flip. Progress on any two is insufficient if the third remains unsolved.

Thermal Management. Radiator specific mass must drop by 50% or more from current operational hardware, OR operating temperatures must rise significantly, requiring new silicon architectures that tolerate sustained operation above 125°C.

Radiation Tolerance. High Bandwidth Memory must achieve a 10× improvement in Total Ionizing Dose tolerance, OR neuromorphic and analog computing architectures must mature to the point where they can replace HBM-dependent accelerators for inference workloads.

Launch Economics. Starship or an equivalent vehicle must achieve routine operations at $50/kg or below AND maintain a high enough flight rate to support periodic servicing missions at marginal cost.

These dependencies are serial, not parallel. Solving launch economics without solving thermal management simply delivers cheaper hardware to a system that still cannot cool itself. Solving radiation tolerance without solving launch economics produces chips that work in space but cost too much to get there. The path to a viable ODC runs through all three gates, and the SmallSat is the laboratory where each gate is tested.

Acknowledgments

This series was co-authored by Abbey White, Evan Grey, and Simon Payne, with technical oversight from Dr. Paul Struhsaker, whose rigor ensured the analysis stayed grounded in defensible engineering. Any remaining errors are our own.